I read the new DCC homepage and wanted to provide some introductory remarks about Sparsey and address the four distinguishing characteristics of DCC.

Sparsey originated as my PhD thesis work in the early 90s, culminating in my 1996 thesis. The theory was then called TEMECOR, standing for “Temporal Episodic Memory using Combinatorial Representations”. I use “sparse distributed representation” (SDR) and “sparse distributed code” (SDC) as synonyms for “combinatorial representation”, and will use SDC hereinafter. SDC corresponds closely to Hebb’s concept of a cell assembly in neuroscience.

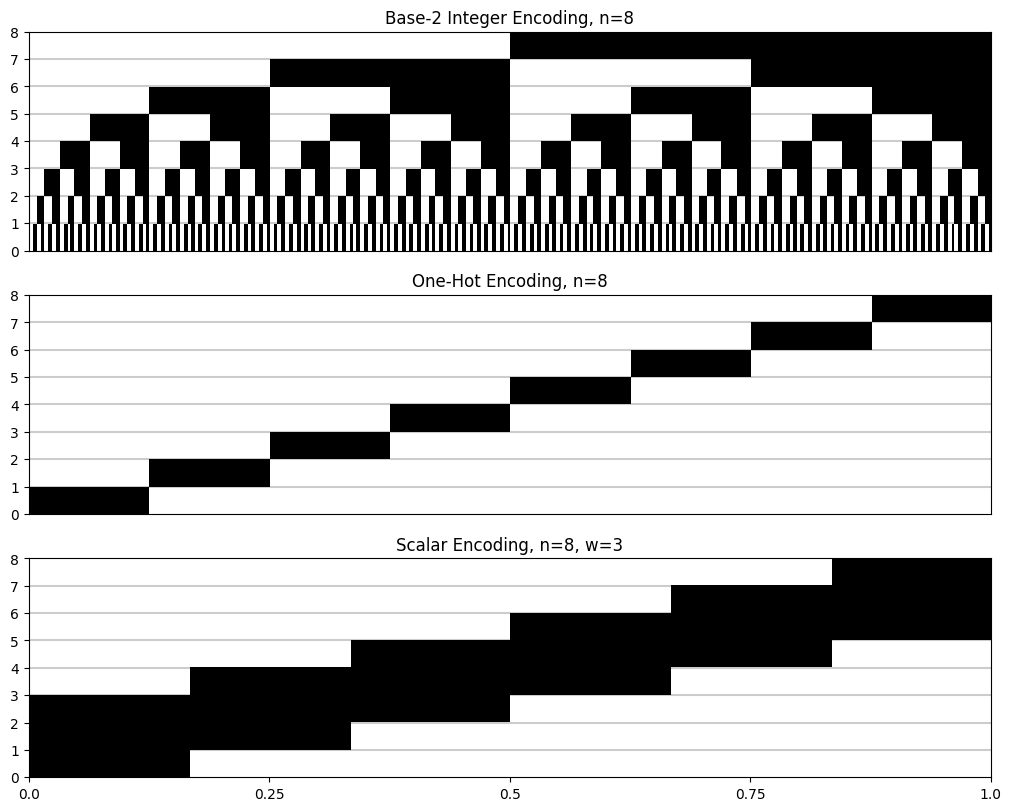

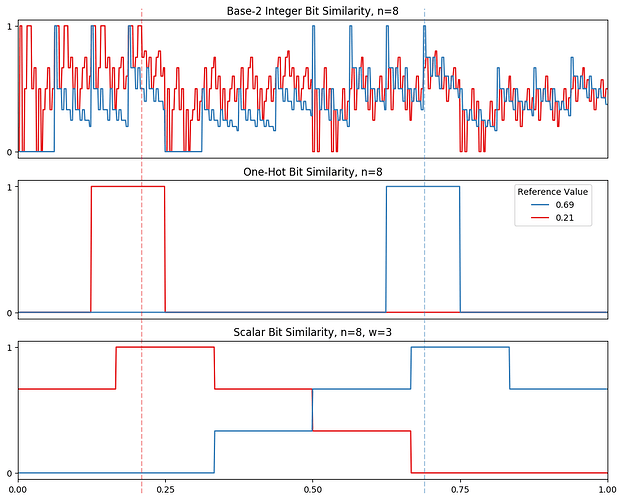

A Sparsey coding field (CF) is a set of Q winner-take-all (WTA) competitive modules (CMs), each comprising K binary neurons. Every code that becomes active in a CF consists of exactly Q active neurons, one chosen from each of the Q CMs. This meets DCC characteristics 1 and 3. Although you could call this a “k-WTA” (or rather, a Q-WTA) code, there are computational advantages to enforcing a precise code size (weight) by organizing the CF as a set of Q independent WTA modules, within each of which one winner is chosen, rather than as a flat field of Q×K units from which Q are chosen (as HTM does). My Medium article, “A Hebbian cell assembly is formed at full strength on a single trial”, provides further discussion relevant to DCC characteristic 3 (though note that the CFs in the article are not modular, i.e., not broken into Q WTA CMs, but are rather just flat fields: the modular structure was not needed for the article’s purposes).
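To make the structural difference concrete, here is a minimal Python sketch contrasting a modular CF (one winner per CM) with a flat field from which the top Q units are taken. It is not Sparsey's code selection algorithm: the hard-max winner rule, the function names `choose_modular_code` and `choose_flat_code`, and the example scores are all assumptions made purely for illustration.

```python
from math import comb


def choose_modular_code(scores, Q, K):
    """Pick one winner in each of Q competitive modules (CMs) of K units.

    `scores` is a flat list of Q*K values; CM q owns indices q*K .. q*K+K-1.
    A hard max per CM is an assumption of this sketch, not Sparsey's rule.
    """
    if len(scores) != Q * K:
        raise ValueError("scores must have exactly Q*K entries")
    code = []
    for q in range(Q):
        block = scores[q * K:(q + 1) * K]
        winner = max(range(K), key=block.__getitem__)  # first max on ties
        code.append(q * K + winner)
    return code


def choose_flat_code(scores, Q):
    """Flat-field alternative: the Q highest-scoring units, wherever they are."""
    ranked = sorted(range(len(scores)), key=scores.__getitem__, reverse=True)
    return sorted(ranked[:Q])


Q, K = 3, 4
scores = [0.9, 0.8, 0.7, 0.1,   # CM 0
          0.2, 0.3, 0.1, 0.0,   # CM 1
          0.4, 0.1, 0.2, 0.3]   # CM 2

modular_code = choose_modular_code(scores, Q, K)  # [0, 5, 8]
flat_code = choose_flat_code(scores, Q)           # [0, 1, 2]

# Both codes have exactly Q active units, but only the modular code is
# guaranteed to have one active unit in every CM.
n_modular_codes = K ** Q        # 64 possible codes
n_flat_codes = comb(Q * K, Q)   # 220 possible codes
```

In this toy case the flat field lets all three winners fall in the first four units, whereas the modular field spreads the code across every CM by construction.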

Regarding DCC characteristic 2, much of Sparsey’s functionality is achieved using only binary synapses (weights). However, in the full Sparsey model, the weights are in fact coarsely graded, which allows them to decay. Broadly, this “decay” functionality is needed so that inputs which are stored, i.e., for which codes (SDCs) are assigned, but which are in fact just noise or in some way spurious (i.e., not reflective of the actual statistics of the input space), can be forgotten. Because Sparsey is designed to natively do single-trial learning (because its initial goal was to explain human episodic memory), it requires some general (unbiased) policy for forgetting noisy/spurious inputs. With that in mind, the learning policy is as follows: a) all weights are initially zero; b) whenever both the pre-synaptic and post-synaptic units are co-active (i.e., a “pre-post coincidence” occurs), the weight is set to its maximum value, i.e., effectively to binary “1”; and c) a weight’s value can decrease over time if further pre-post coincidences do not occur sufficiently often. The number of weight values between binary “0” and binary “1” needed to achieve this forgetting functionality is still to be explored, but is probably quite small, e.g., 5-10, for almost all applications (i.e., to explain almost all human memory phenomenology).
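Parts a) to c) of the policy can be sketched for a single synapse as follows. This is only an illustration under stated assumptions: the class name `Synapse`, the choice of 8 discrete levels, and the rule of dropping one level on every time step without a coincidence are hypothetical stand-ins, not the actual Sparsey decay schedule.

```python
class Synapse:
    """One coarsely graded weight with single-trial learning and decay."""

    def __init__(self, levels=8):
        # `levels` (0 .. levels-1) is a hypothetical choice for this sketch.
        self.levels = levels
        self.weight = 0  # (a) all weights start at zero

    def update(self, pre_active, post_active):
        if pre_active and post_active:
            # (b) a pre-post coincidence sets the weight to its maximum,
            # i.e., effectively to binary "1", in a single trial.
            self.weight = self.levels - 1
        elif self.weight > 0:
            # (c) without a coincidence the weight decays; one level per
            # step is an assumed schedule.
            self.weight -= 1

    @property
    def value(self):
        """Weight rescaled to the range [0, 1]."""
        return self.weight / (self.levels - 1)


syn = Synapse()
syn.update(True, True)        # single trial: weight jumps to the maximum
after_learning = syn.value    # 1.0
for _ in range(3):
    syn.update(False, False)  # three steps with no coincidence
after_idle = syn.weight       # 4
for _ in range(10):
    syn.update(True, False)   # pre alone is not a coincidence
after_forgetting = syn.weight  # 0: the trace has been forgotten
```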

Just to flesh out the discussion, one major reason why a distributed memory (of which DCCs are a subclass) should not permanently store all inputs that ever occur (the majority of which might in fact be noisy/spurious) is that the memory would fill up too fast: all, or too high a fraction of, the weights would saturate at “1”, which would effectively erase (render irretrievable) all of the information (codes) stored in the memory (cf. catastrophic forgetting). However, while it’s true that a memory needs to forget noisy/spurious (essentially, useless) inputs, it generally also needs to permanently retain useful information, i.e., inputs reflective of the statistical (ultimately, the physical/causal) structure of the input space (i.e., of the physical world generating those inputs). But those latter inputs are, by their nature, more likely to recur (or approximately recur) than the former (noisy/spurious) inputs, precisely because they are generated by the structural regularities of the physical world that gives rise to the inputs. This suggests the need for a second parameter of the weights, which I call permanence, which governs the rate at which a synapse’s weight decreases as a function of the temporal history of the pre-post coincidences it experiences. So, the last part of the policy is: d) all weights initially have permanence zero, but permanence increases monotonically and quickly (i.e., after relatively few, sufficiently frequent pre-post coincidences) to a high asymptote, corresponding to the condition where the weight permanently remains at binary “1”.
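As a rough sketch of how permanence could interact with decay, the Python below tracks a weight and a permanence for one synapse. Everything numeric here is assumed for illustration only: the constants `MAX_WEIGHT`, `MAX_PERMANENCE` and `WINDOW`, the rule that a coincidence counts as “sufficiently frequent” if it follows the previous one within `WINDOW` steps, and the doubling of the decay interval with each permanence level are hypothetical, not Sparsey's actual parameters.

```python
class PermanentSynapse:
    MAX_WEIGHT = 7       # assumed top weight level (binary "1")
    MAX_PERMANENCE = 3   # assumed asymptote: weight no longer decays
    WINDOW = 10          # assumed max gap for "sufficiently frequent"

    def __init__(self):
        self.weight = 0
        self.permanence = 0  # (d) permanence starts at zero
        self.idle_steps = None  # steps since last coincidence; None = never

    def step(self, coincidence):
        if coincidence:
            recurred = (self.idle_steps is not None
                        and self.idle_steps <= self.WINDOW)
            if recurred and self.permanence < self.MAX_PERMANENCE:
                self.permanence += 1  # monotonic: never decreases
            self.weight = self.MAX_WEIGHT
            self.idle_steps = 0
            return
        if self.idle_steps is None:
            return  # nothing stored yet, nothing to decay
        self.idle_steps += 1
        if self.permanence >= self.MAX_PERMANENCE:
            return  # at the asymptote the weight stays at its maximum
        interval = 2 ** self.permanence  # higher permanence, slower decay
        if self.weight > 0 and self.idle_steps % interval == 0:
            self.weight -= 1


def run(pattern):
    """Feed a sequence of booleans (coincidence or not) to a fresh synapse."""
    syn = PermanentSynapse()
    for coincidence in pattern:
        syn.step(coincidence)
    return syn


# A spurious input: one coincidence, never repeated, so it is forgotten.
noise = run([True] + [False] * 7)

# A recurring input: four coincidences at short gaps, then a long silence.
regular = run([True, False, False] * 3 + [True] + [False] * 100)
```

Under these assumptions the one-off input decays back to weight 0, while the recurring input reaches maximum permanence and keeps its weight at the maximum through the long silence.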

Sparsey conforms to DCC characteristic 4, but this is not a strong constraint. Virtually all natural input spaces/processes that have ever been or are currently represented on digital computers are necessarily represented discretely, i.e., as bit patterns.

This initial remark on Sparsey is really only intended to address the four DCC characteristics. I’ll try to make separate posts to describe Sparsey’s core algorithm, the code selection algorithm (CSA), which is its method for choosing the SDCs to be assigned to inputs.